The AI Governance Practitioner Program

AI governance starts with knowledge. But success depends on skillful application, judgment, and the ability to influence decisions. It demands real and demonstrated practitioner capability.

How we build your capability.

Most training courses focus on accumulating disconnected knowledge. You pick a course, complete it, pass a test, and pick another. There's no developmental logic connecting them, no shared methodology running through them, and no formal way to demonstrate that your capability, not just your knowledge, has been tested. They also provide no structured path to career success. This program is very different.

Breadth

to understand

The Foundation Track first builds cross-domain capability across technology, risk, organizational design, and governance design. You learn to apply the knowledge and core practices of adaptive governance. This shared foundation of knowledge, skills, and resources enables you to be effective as a starting practitioner.

Depth

to specialize

Six specialty courses help you go deep in the areas that match your career direction: Compliance, Risk, Evaluation, Engineering, Operations, and Leadership. Each one builds on the foundation's shared methodology and applies it through the lens of a specific governance discipline, with its own practical toolkit. Successful assessment leads to recognition with a Specialist Practitioner Award.

Mastery

to lead

Master Practitioner status is earned through expertise in multiple specialties, verified through assessment and a formal interview. But recognition is only part of it. Master Practitioners can gain opportunities to write, teach, and coach with AI Career Pro, building practical experience, a professional profile, and a track record that opens doors. It's where capability becomes a career.

“The AI training market is saturated with introductory courses that hover at a high level and never translate into real-world execution. They talk about governance, but don’t give you the tools to implement it.

AI Career Pro is completely different. It’s clear the curriculum was built by people who truly understand the job from the inside out. It moves beyond abstract concepts and focuses on the practical how-to of day-to-day operations.

That shift from theory to practice has been a game-changer for my consulting work. It gave me a concrete structure to operationalize AI governance for companies across LATAM, turning regulatory requirements into engineering realities.

If you want to understand how governance is actually built inside an organization, this is the place.”

RODRIGO ZIGANTE, Chile

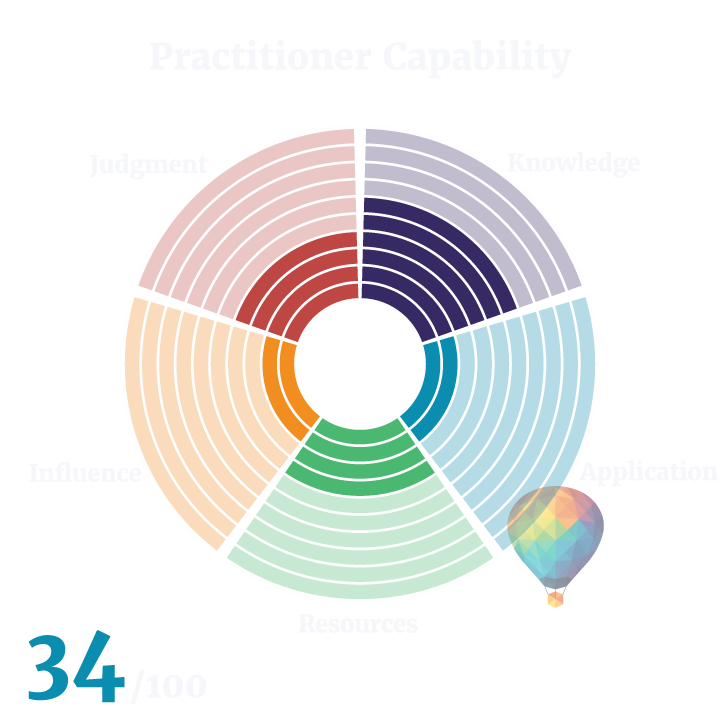

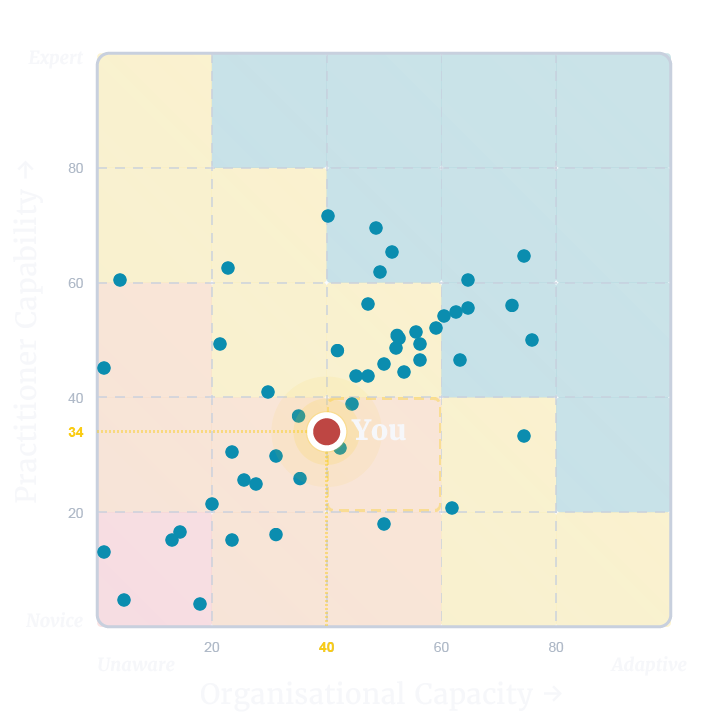

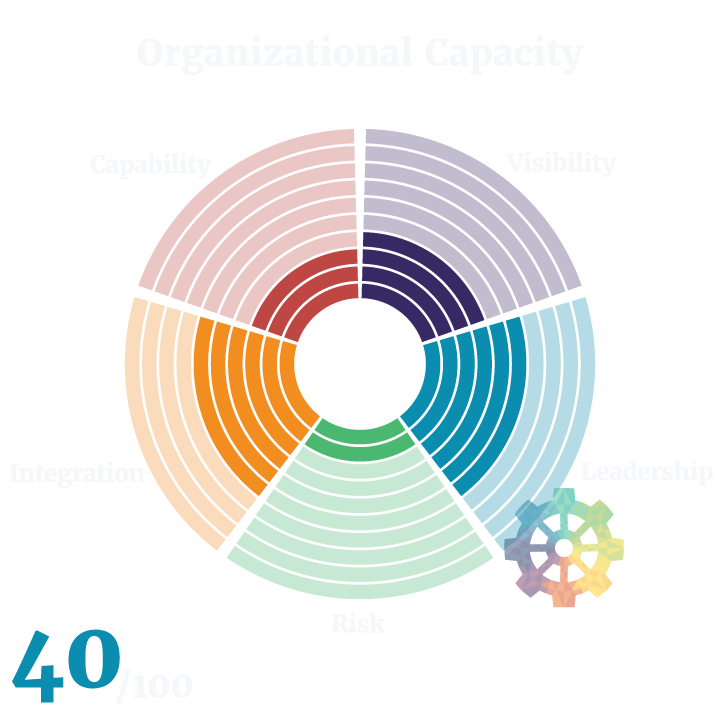

Step 1. Know where you stand.

Before you commit to a course or a track, you need an honest picture of where your capability actually sits. The AI Governance Practitioner Capability Assessment gives you that. It identifies what you know, where the gaps are, and which part of the program is the right starting point for you. It's free, it's fast, and it removes all the guesswork.

Step 2. Understand what the work actually involves.

Doing the Work of AI Governance FREE Course


Step 3. Build your foundations.

Foundation Track. Four courses. Self-paced or in a practitioner cohort.


Step 4. Go deep into your specialty.

Six courses: Compliance. Risk. Engineering. Evaluation. Operations. Leadership.

Individual Courses

Tracks, Bundles & Practitioner Cohorts

Frequently asked questions

Are your courses regularly updated?

Yes! Our school is committed to creating and continuously improving effective learning resources. All course feedback is reviewed and actioned, and we do a complete review of each course for accuracy and currency every 6 months.

Do I get a certificate for your online courses?

Yes! You will receive a Certificate of Completion for any online course you complete.

What if I have more questions that are not answered here?

Please send your questions to grow@aicareer.pro and we will respond as soon as possible.

Do I need any prior experience in AI governance to start?

No. The free course, Doing the Work of AI Governance, assumes no prior experience and is designed as the starting point. The Foundation Track builds from there. If you already have experience, the free assessment will show you where you stand and recommend where to focus.

What's the difference between a Certificate of Completion and a Practitioner Award?

A Certificate of Completion confirms you've worked through the course material. A Practitioner Award confirms your capability has been tested through a graded assessment in a Practitioner Cohort. Only the Practitioner Award counts toward the Master Practitioner pathway.

Can I start self-paced and switch to a cohort later?

Yes, subject to available capacity. Your progress carries over. You'll join the cohort and complete the graded assessment at the end to earn the Practitioner Award.

I already have the AIGP certification. Is the Foundation Track still useful?

The AIGP tests knowledge. The Foundation Track builds practical capability: mechanism design, policy writing, governance structure design, and the adaptive governance methodology that connects them. Many AIGP holders find significant gaps between what they know and what they can do in practice. The assessment will tell you where you stand.

I'm a lawyer. Are these courses relevant to me?

Yes. AI governance sits at the intersection of law, technology, risk, and organizational design. The Foundation Track is specifically designed to build cross-domain capability regardless of your starting discipline. The Compliance and Leadership specialties are particularly relevant, but legal professionals also benefit from understanding how governance is engineered and operationalized, not just documented.

How much time should I expect to invest?

Each Foundation Track course is roughly five hours of video plus exercises and reading. Each specialty is eight to eleven hours of video plus exercises and reading. In a Practitioner Cohort, expect to commit eight weeks at five to six hours per week. Self-paced learners set their own schedule.