Apr 24

James Kavanagh

The Three Leaps in Your AI Governance Career

What the data from 207 practitioner assessments (so far) tell us about the transitions that define an AI governance career

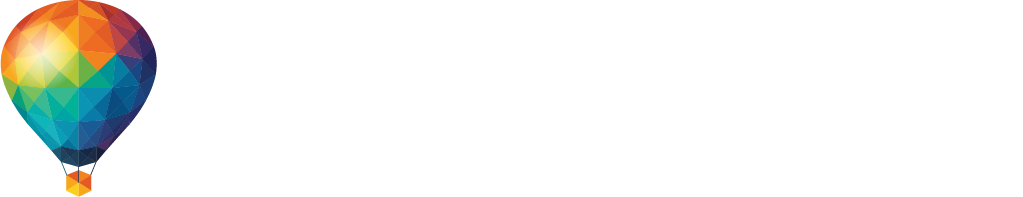

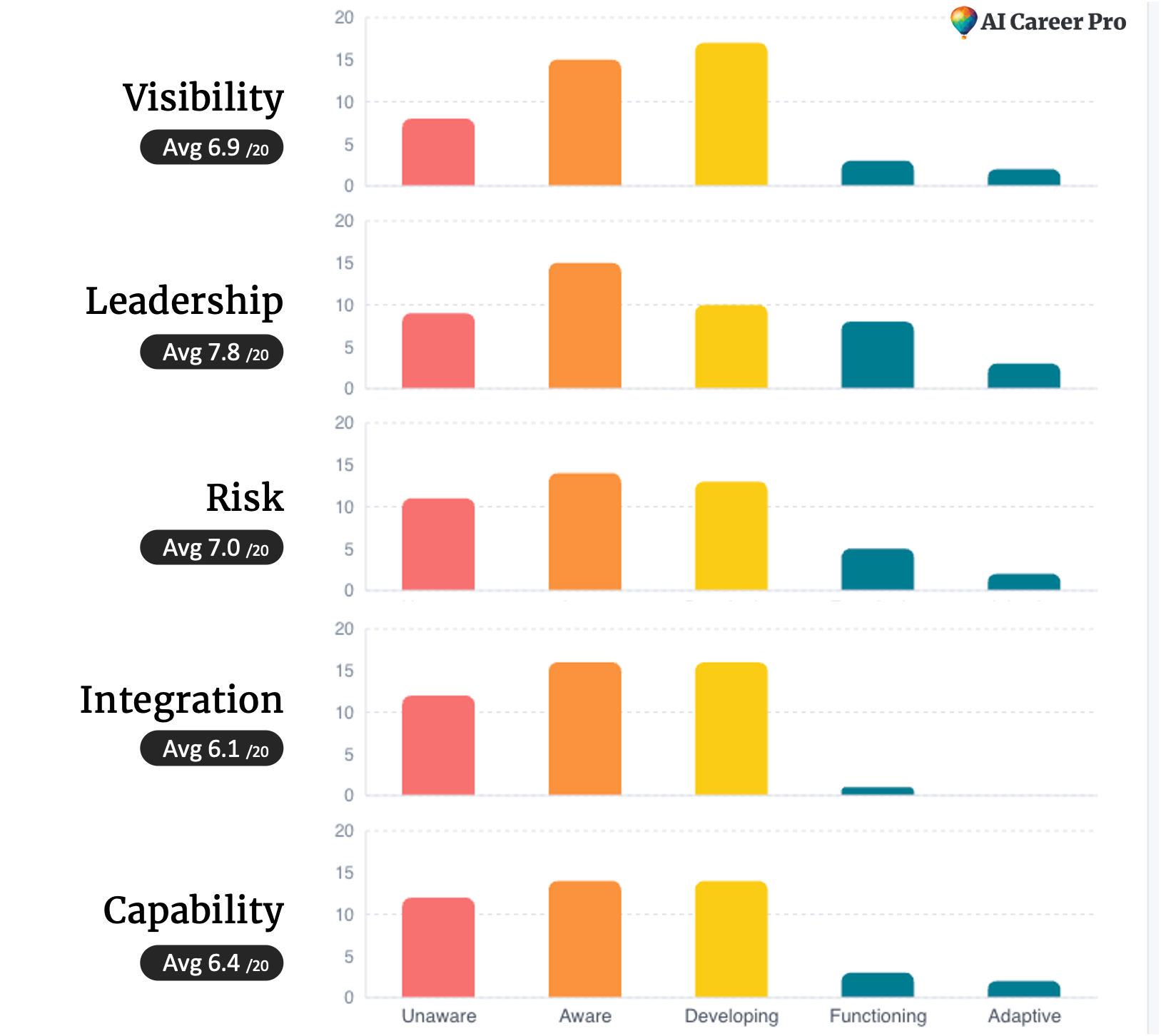

We've now had over two hundred practitioners complete the Adaptive Governance Assessment since we launched it two weeks ago, and the results are painting a remarkably consistent picture. Practitioners at every level are getting stuck in the same places, and seemingly for the same reasons. Knowledge runs ahead of application: 87% of early-stage practitioners score significantly higher on what they know than on what they've built. For developing practitioners, application runs far ahead of influence: over half of mid-career practitioners have Influence or Judgment as their weakest dimension. And individual capability runs ahead of organisational capacity: the average organisational score is just 34 out of 100. Leadership is the strongest dimension at 7.8 out of 20, still only at an Aware level of maturity, while Integration, the extent to which governance is actually embedded operationally, is the weakest at 6.1. The aspiration is there, but the operational reality isn't.

I recognise the pattern because I've lived it, across over two decades in engineering and governance at Microsoft and Amazon. And I suspect you'll recognise it too, wherever you are in your career, because the transitions from knowledge to practice to adaptation to leadership follow the same shape regardless of the domain. The challenges I see in the data are the same ones I had to traverse in my own career, each one uncomfortable, each one necessary. They're also the reason we've built AI Career Pro the way we have. Here's the story, and here's what the data tells us about where you might be standing right now.

You can explore this data yourself by taking the Adaptive Governance Assessment. It's free and takes about fifteen minutes. I'll keep sharing what the results tell us as the dataset grows, so treat this article as a quick first take.

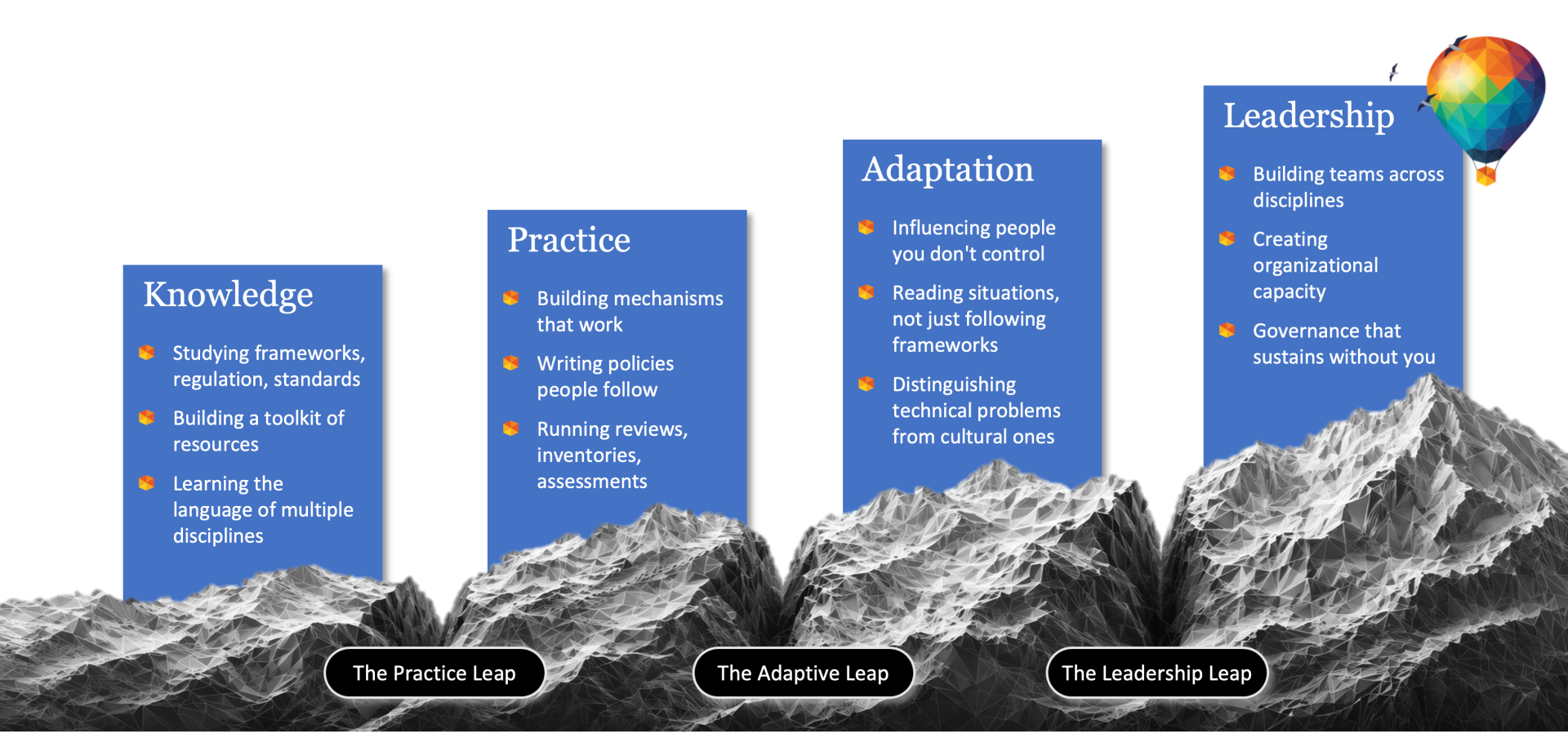

The Practice Leap: From knowledge to practice

I started in chemical engineering, working in safety and simulating disasters. Process, discipline, theory and rigour. But after some years working on oil refineries and chemical plants around the world, I decided to move into software engineering, and I threw myself into that learning with everything I had. I got more than a dozen certifications in Oracle, IBM, and Microsoft technologies, studying every morning and every evening. I knew the theory, passed all the exams. I felt like I understood how systems should be designed.

Then I got my first software engineering role, and I discovered something humbling. Knowing how a system should be designed and actually building one are completely different things. The textbook told me how to architect a solution. Reality gave me legacy systems, messy integrations, constraints nobody had written about, and colleagues with very different assumptions from mine. Written under my monitor at home I had scribbled: "You have no idea what you're doing. Do it anyway."

So I cycled through for years: learn from the book, apply it, hit reality, go back to the book, refine, apply again. For me this was the reality of perhaps five years across multiple transitions, from the UK to Ireland to emigrating to Australia, then into Microsoft. Each move was the same grinding pattern: knowledge meeting reality, and reality winning until I'd built enough craft to close the gap. And it's not a transition that happens just once or in one clean step. Over the course of multiple pivots into security, into consulting, into product engineering, I kept refining that same capability, building confidence through doing rather than studying.

One of those pivots came because an inspiring leader at Microsoft, Michael Gration, saw depth in one domain and challenged me to build it in others. He asked me to lead as Security Officer for Microsoft Australia, even though it meant starting from scratch again in a field I'd never worked in. It was the practice leap all over again: new domain, new knowledge gap, same grinding cycle of learning and applying until the craft caught up with the aspiration.

The lesson was hard-won, and it's the same lesson that every practitioner eventually learns. Knowledge has a ceiling. You can study frameworks and pass exams and feel prepared, but until you apply what you know to something real, with real constraints and real consequences, you haven't built capability. You've built confidence, sometimes misplaced confidence. And confidence without practice is fragile.

What the data shows.

The practice leap is the clearest pattern in the assessment data. Among Novice and Emerging practitioners, 87% score higher on Knowledge than Application. At Novice level, the average Knowledge score is 4.5 out of 20, while Application sits at just 1.3. Forty percent of Novice respondents scored zero on Application. They've studied, they've read the frameworks, some have passed the AIGP, but they haven't built anything yet.

Knowledge is the highest dimension for two-thirds of Novice practitioners. They're standing on one side of the first chasm, and they can feel the gap even if they're not sure what to do about it. The certification prepared them. The work hasn't started. And if you've ever walked into an interview with nothing in your briefcase but a fat textbook and a bunch of certificates (as I have), you know exactly what that feels like.

The Adaptive Leap: From building to influencing

By the time I was leading security and assurance work at Microsoft, I had mastered the practice. I had the knowledge, the expertise, and the confidence to engage with technical teams and hold my own. With the emergence of cloud services, we'd built a really strong program around trust and assurance, and I was proud of what we'd achieved. The results were impressive, with Microsoft seeing some of the fastest adoption of cloud and highest levels of trust anywhere in the world.

Then I wanted to scale it. I wanted to convince the rest of Microsoft that our approach to trust was the right one, and the approach was counterintuitive: instead of telling customers why they should trust us, we needed to start by listening to what each customer actually meant by trust. For some it was accountability. For others it was responsiveness, or credentials, or transparency. Every customer was different, and we'd figured this out through the hard work of actually listening. I wanted that insight to spread across Microsoft globally.

So I went around trying to convince people. I presented the logic, I explained the approach, and it didn't work. I had the right answer (or at least I believed I did), but I just could not move the needle through knowledge and reason alone. I was hitting a different kind of wall, and it wasn't a practice problem. It was a people problem.

The turning point came when Satya Nadella was giving one of his open forums in Seattle. I asked him: what does it mean for Microsoft to move from saying we're trustworthy to actually being felt as trustworthy by our customers? He gave a standard answer about how deeply Microsoft invests in security, our credentials, etc. I didn't find it satisfactory.

So I did something I wouldn't normally have done. Thanks to the mentorship of an inspiring leader, Scott A. Edwards, who'd been pushing me to think differently about influence, I emailed Satya to let him know his answer was wrong. I laid out a different approach, one that started with listening to what each customer means by trust, not what we think they should value.

To my surprise, he emailed back, copying many of his senior executives. He wrote very simply: "James is right. We should do this."

That moment changed how I understood professional impact. It taught me something that no certification or course could have taught me: influence, judgment, timing, relationships, and the capacity to change human behaviour are not secondary to good governance. They're fundamental to it. Some problems can't be solved by engineering the right solution. They have to be solved by taking action, by seeing what happens, by influencing people and culture and behaviour rather than simply specifying the right design. That's adaptive work. And it requires a kind of judgment that you cannot get from a textbook.

What the data shows.

Across all assessment respondents, Judgment is the weakest dimension. Forty-one percent of practitioners have it as their lowest score, and Influence is next at thirty-four percent. These are the two dimensions that define the adaptive leap, and they're where practitioners struggle most.

At Developing level, the pattern sharpens. More than half of Developing practitioners have either Influence or Judgment as their weakest dimension. Their Knowledge, Application, and Resources scores are solid enough. They can build governance. But they haven't yet learned to navigate the human side of it: the politics, the stakeholder management, the ability to read a room and know when to push hard and when to compromise. They're standing at the edge of the second chasm. Some are staring into it, some averting their eyes, but I think most of them know it's there.

By the time practitioners reach Proficient, for the few that do, the picture changes considerably. Scores balance out across all five dimensions, averaging around 13 to 14 on each. The leap has been made. Knowledge and practice are still there, but Influence and Judgment have caught up. These practitioners have learned to read situations, not just follow frameworks. And that shift shows up clearly in the data.

The Leadership Leap: From capability to capacity

I left Microsoft in 2019 and made a big change to join Amazon Web Services. There I was leading regulatory engagement for Europe. A small team, focused mission, deep expertise. Our responsibility was preparing for the complex regulatory changes happening in Europe, guiding engineering through the changing requirements, and proving the validity of assurance claims. I knew the landscape, I could navigate complex stakeholder dynamics, and I was confident in my judgment and my ability to influence outcomes.

Then, in a matter of months, I was asked to lead all global regulatory engagement. With the benefit of mentorship from another inspiring and very different leader, Oliver Bell, I stepped up to leading forty-five people across twenty-five countries in almost every timezone. They all had vastly different regulatory contexts to deal with, and equally different skill sets, cultures, and working styles. Every one of them was a senior, experienced professional, some of the best I'd ever worked with, and all of them knew their local space better than I ever could. Masterful practitioners.

I couldn't rely on my own depth of direct knowledge or even judgment anymore. I couldn't be the person solving problems, and I certainly couldn't influence my way through every conversation with a team of forty-five spread across the world. I had to make a fundamentally different kind of leap.

I had to crystallise unifying ideas that forty-five different senior professionals could all align to and act on. One was to focus our objective on making the market addressable: identify every individual blocker to a customer trusting and adopting AWS, and measure our impact in terms of removing those blockers, one by one, with the tactics for doing so best decided locally. Another was to build culture through mechanisms of continuous improvement. We blended Amazon mechanisms and Kaizen, practices of small changes on rapid cycles, as a way to keep everyone engaged in the process of getting better, across all that diversity and distance. With such a complex, varied landscape and such a range of people and contexts, I couldn't impose a single way of working. I had to create the conditions for forty-five experienced professionals to find their own paths within a shared direction.

That meant moving from "me and my practice" to "we and our culture." From being an effective practitioner to building organisational capacity. It was the hardest leap of the three, and the one I see the fewest practitioners preparing for. Governance at scale isn't about your capability. It's about building organisational capacity, creating conditions where experienced people across the world can do their best work together, embedded with the teams that actually design and build AI systems.

What the data shows.

Sixty-one percent of assessment respondents sit at Novice or Emerging, at or before the first leap. Fewer than ten percent self-assess as having reached Proficient levels. The field is young, and most practitioners are still early in their journey, which means very few have even begun to confront the leadership leap.

The organisational assessment results tell the story of the third leap. The average organisational capacity score is just 34 out of 100, and even the strongest dimension, Leadership, sits at only 7.8 out of 20, an Aware level of maturity with wide variation between respondents. Some organisations have a strong leadership mandate. Many have almost none. But the most telling finding is that Integration, the extent to which governance is embedded into how AI systems are actually built, deployed, and operated, is the weakest dimension at 6.1 out of 20. The aspiration exists. Operational governance doesn't, at least not yet.

This is where the two assessments reveal something that neither can show alone. Many experienced practitioners discover that their own capability is well ahead of the organisation they're trying to lead. They can do the work brilliantly. But the organisation hasn't built the visibility, the risk capability, or the operational integration to sustain governance without depending on them. That's the third chasm, and it's the one that determines whether governance survives your departure or collapses when you leave.

Why AI Career Pro is built this way

These three leaps are not unique to my career. Every practitioner I work with faces them, and at each step they feel just as uncomfortable as I did, just as in need of coaching and guidance and a clear direction forward. I am grateful to the mentors and opportunities I had. That experience is a big part of why we've built AI Career Pro the way we have.

The Foundation Track is designed for the practice leap. It takes knowledge, the frameworks and standards and regulation and technology, and teaches you how to actually apply it. How to write a policy that people follow, how to build an inventory that functions, how to design a mechanism that works under pressure. The focus is on that (sometimes) grinding, iterative transition from knowing what governance should look like to being able to build it, because that's where most practitioners do get stuck and where the assessment data shows the widest gap.

The Specialty Courses, covering Compliance, Risk, Engineering, Operations, Evaluation and Leadership, are designed for the adaptive leap. They go deeper into specific domains, yes, but more importantly they teach you to work across disciplines you don't control. To read situations and distinguish technical problems from cultural ones. To build mechanisms that adapt as your context changes. To influence people whose priorities differ from yours, and to exercise judgment when the evidence is incomplete. These are the capabilities that the data shows practitioners struggle most to develop, and they don't come from studying. They come from guided practice in increasingly complex situations.

The Master Practitioner pathway and Enterprise Coaching are designed for the leadership leap. Building organisational capacity, creating conditions where governance works as a distributed capability, not something that depends on one brilliant, hard-working person. Leading across contexts you don't fully control, building culture and sustaining it. For me, this is the work that determines whether governance lasts beyond your involvement, and it's the work that the field needs most as it matures.

The hard truth: this takes real work.

Real capability in AI governance is built over years of applied work, practice, failure, reflection, and guided development. It does not come from a multiple-choice exam and a certificate. AIGP, ISACA, and the multitude of professional certifications emerging to stamp your knowledge are worthwhile, but they're only a starting point. They're not enough to advance to mastery within a meaningful, rewarding career in AI governance.

You have to prepare for and make these three genuine leaps. The practice leap, where you learn that knowing and doing are different things. The adaptive leap, where you learn that solving technical problems and influencing people towards change are very different things. The leadership leap, where you learn that the real work is building teams and embedding governance deeply enough that it sustains itself without you.

Each leap is uncomfortable. Each one makes you feel like an imposter (or at least it sure did for me). Each one can be made easier with mentorship, community, clear direction, and time. But each one is necessary. And the work along the way is some of the most meaningful and necessary work in technology right now.

If you're at the start of this journey, know that you're not alone in feeling uncertain. The discomfort is real and it's normal. And there is a pathway through it. It just takes time, practice, community, and the willingness to keep learning.

That's what we're here at AI Career Pro to help with, wherever we can.

And of course, you can take a first step by doing the assessment and diving into this data yourself at assess.aicareer.pro.

Thank you for reading.