In 1995, a man named McArthur Wheeler robbed two banks in Pittsburgh in broad daylight. No mask, no disguise. He just walked up to the counter, demanded the money, and walked out. And that afternoon, he did it again. When police later arrested him after surveillance images were broadcast, Wheeler was baffled. "But I wore the juice," he said.

He had covered his face in lemon juice. Someone had told him that lemon juice could be used as invisible ink, and Wheeler had reasoned, with full confidence, that if it made writing invisible on paper, it would make his face invisible on camera. He had even tested it beforehand, taking a Polaroid selfie with lemon juice on his face. Seeing a blurry photo, he thought "Good enough."

That story found its way to a psychologist at Cornell named David Dunning, who read about it and thought: this isn't just a man doing something stupid. This is a man who lacks the very knowledge he would need to recognize what he doesn't know. So together with his colleague Justin Kruger, Dunning went on to publish a widely cited psychology paper. Their finding, now known as the Dunning-Kruger effect, sounds obvious once you hear it: in any given domain, the people with the least competence are the ones who most overestimate their competence. Not because they're foolish. Because the skills you need to assess your capability are the same skills you're missing.

Working in AI governance, I find that bias comes up a lot, and Dunning-Kruger features especially often. But not for the reasons you might expect, such as model evaluations or human oversight. And not because the people I talk to are robbing banks with lemon juice on their faces. But because, at some point or another, we're all wearing the juice. We all have domains where the gap between what we think we know and what we actually know is wider than we'd like to admit. In hindsight, I know for sure there have been times when I wore the juice. And I keep having two versions of the same conversation where that gap is on full display.

I regularly talk to aspiring AI governance practitioners who have done real work to get where they are. They've built careers in their own domains as lawyers, engineers, executives, and auditors, and they bring genuine professional experience. And they've invested in the IAPP AIGP certification on top of that. That represents a serious investment, and it matters. But some of them are disappointed that there isn't a job waiting for them on the other side. I have to be honest with them: certification puts you at the start line, not the finish. It gives you baseline knowledge. What it doesn't give you is the practitioner capability to actually do governance work, the resources to do it well, or the influence and judgment that grow from experience. That is the kind of capability that delivers outcomes, and the kind people get hired for. Building that capability takes sustained effort beyond any certification, and most people underestimate how much.

If you're working through that pivot yourself, I wrote separately about what it actually takes: Landing your first job in AI Governance.

All too often, I have similar conversations with leaders in organizations, though this one generally starts from a different place. They've come to believe, or been told, usually by consultants or vendors, that AI governance means compliance. Get certified to ISO 42001. Prepare for your EU AI Act conformity requirements. Buy a platform that automates your risk assessments. The advice sounds reasonable, and the people giving it are often credible, though some have been liberally applying the juice. But it frames governance as a project with a deliverable, and that framing is wrong. Compliance is an output of governance, not the thing itself. An organisation that builds its entire governance program around achieving a certification or passing a regulatory conformance requirement has optimised for the wrong target. When the regulation changes, when a new risk emerges that no standard anticipated, when an incident happens that doesn't fit neatly into an existing control, that organization has nothing to fall back on. The compliance artefacts look impressive. But the underlying capacity to actually govern was never built. And more importantly, they've missed the real potential of better governance: faster innovation, stronger trust, and better competitive differentiation. They just have a stack of paperwork and an out-of-date certificate to hang on the wall.

To these organizational leaders my advice is simple: effective governance is a question of capacity, and you have to build that capacity inside your organization. You can't buy it, and you can't outsource it. The certification or the compliance outcome might be a useful milestone along the way, but it's not the destination. And it's not where the value lies.

Now neither group is McArthur Wheeler. They've done real things. But I think both are less far along than they believe, and the reason is the same one Dunning and Kruger identified: you need a certain level of capability to accurately assess your own capability. Without it, you can't see the gaps. You reach for the juice. And that raises the question that I think both groups are really asking, in different forms: where do we actually stand, and what should we do next?

That's harder to answer than it sounds. AI governance is not a single capability you either have or you don't. It's this wonderful, wicked set of interlocking capacities that mature at different rates. An organisation might have strong leadership commitment but no visibility into its AI systems. A practitioner might have deep technical knowledge but no ability to influence the decisions that matter. Knowing your overall governance capability is low isn't particularly useful. Knowing that your visibility is strong, your risk sensing is developing, but your integration is almost non-existent? That's more actionable.

So I've developed an assessment framework that tries to answer these questions. Not a maturity model in the traditional sense, because most maturity models describe an idealized end state that nobody actually reaches and leave you feeling bad about the distance. This is more diagnostic than aspirational. We've put it together to help tell you where you are, specifically, and point you and your organization forward to what you can do next.

Two Assessments, One Problem

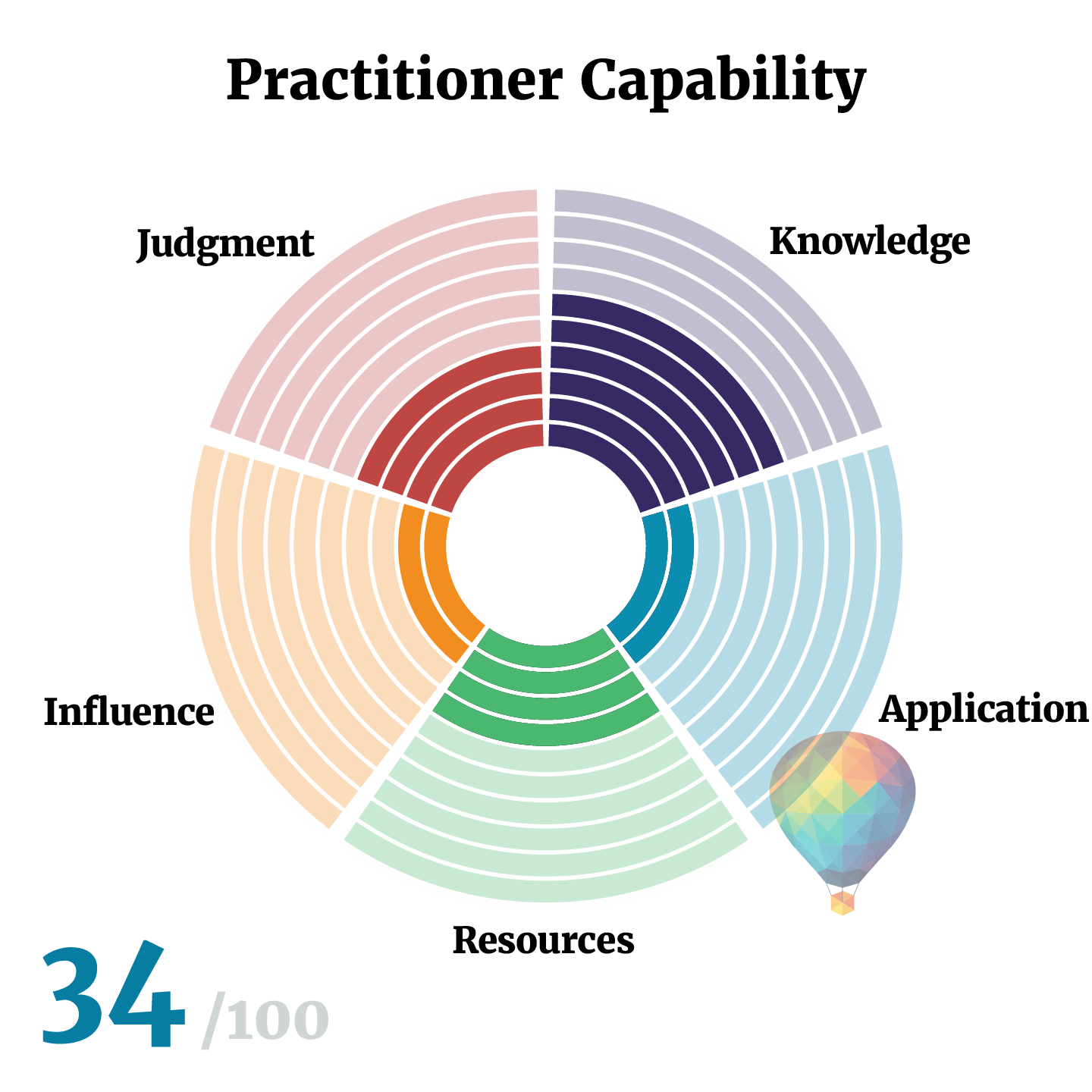

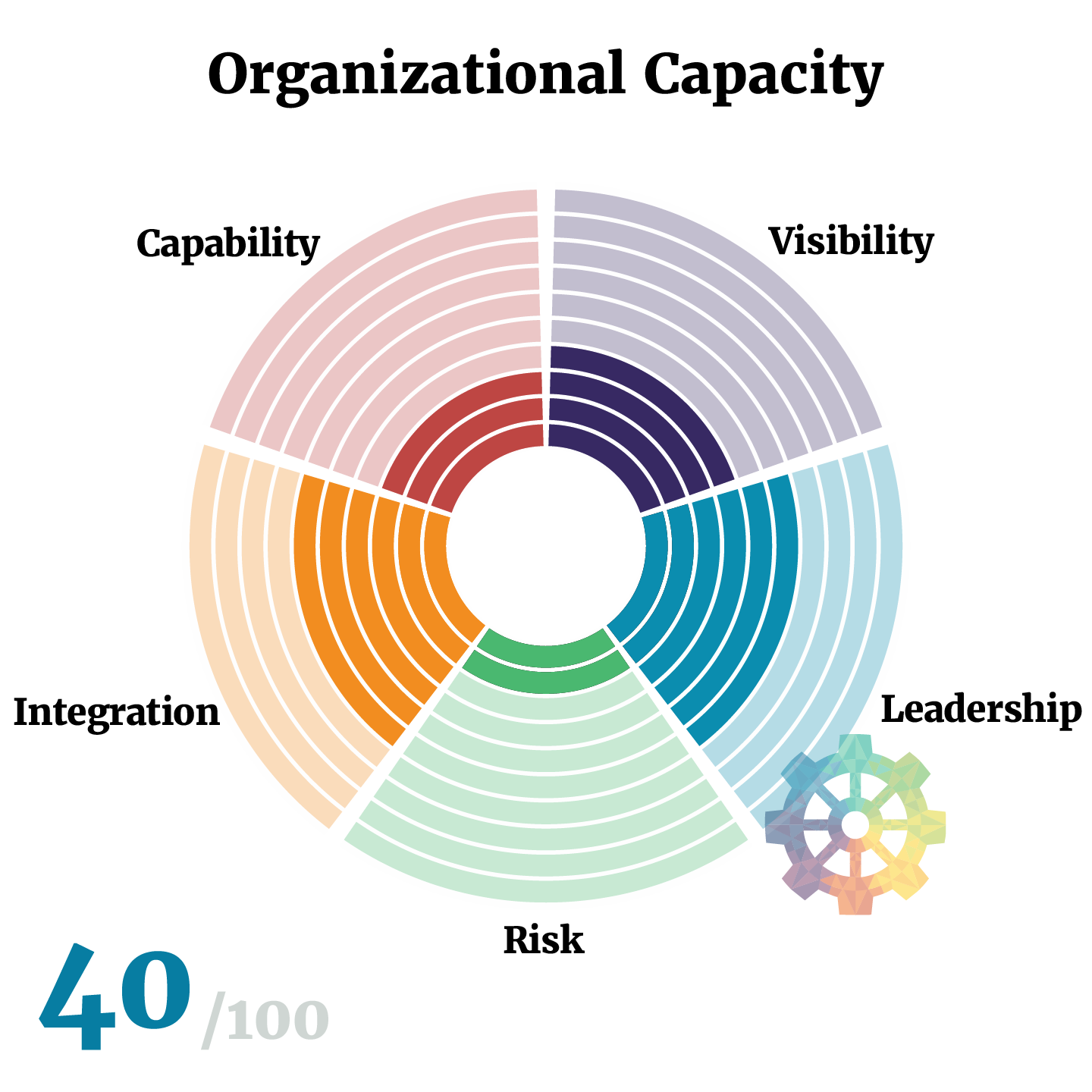

The framework comprises two separate instruments. The first measures practitioner capability: do the people doing governance have the skills, knowledge, resources, influence and judgment to do it effectively? The second measures organisational capacity: does your organisation have the structural, strategic, and operational foundations that make effective governance possible and sustainable?

I separated these deliberately, because they measure different things and they fail in different ways. I've seen organisations with all the organizational capacity you could ask for: executive sponsorship, budget, governance, safety culture, committees, reporting lines. The capacity was there. But the practitioners trying to operate within that structure couldn't actually do the work. They didn't have the technical literacy to engage engineers or to translate regulatory requirements. They didn't have the risk expertise to design meaningful assessments or the applied knowledge and experience to make good judgments. They had authority on paper and no credibility in practice. The result was governance theatre: impressive structure, hollow outcomes.

I've seen the reverse more often. Brilliant practitioners, deep expertise, genuine understanding of both the technology and the governance challenges. And no organisational capacity behind them. No budget. No mandate. No seat at the table where decisions are actually made. They produced excellent analysis that nobody read and thoughtful recommendations that nobody followed. In some organisations, governance exists as a silo that is quietly responsible for maintaining an elegant fiction: the policies, the documentation, the compliance narratives. The organization supplies the juice in copious quantities, and the practitioners help apply it. Meanwhile the engineering and science teams get on with what they consider the real work of safety and security, largely disconnected from the governance function. The practitioners in that silo are not incompetent. They are structurally irrelevant. And eventually they burn out and leave.

Effective AI governance requires both. The organisation provides the conditions - the capacity. The practitioners do the work. If either one is weak, governance fails, just in different ways.

Practitioner Capability: Five Dimensions

Let's start with Practitioner Capability. The practitioner assessment asks: regardless of what your organisation provides, can you actually do this work?

I designed these five dimensions around the ways I've seen governance practitioners fail. Not the obvious ways, like not understanding the technology. The subtler ways that determine whether governance work produces real outcomes or just produces documents.

1. Knowledge

The first thing to understand about AI governance knowledge is that nobody has all of it. The field sits across technology, regulation, risk management, ethics, and organisational governance. You don't need to be an expert in all of those domains. But you need enough depth in each to ask the right questions and to notice when something doesn't add up. If you know the EU AI Act inside out but can't read a system card, you're going to struggle. If you can evaluate model performance but have never thought through a risk assessment or regulatory obligation, same problem. The gaps matter more than the peaks.

This is also the dimension where self-assessment is least reliable, because people tend to confuse familiarity with competence. You've read about bias. You've attended a webinar on the AI Act. You've got your IAPP, ISACA or other certification. You feel like you know this stuff. But the real test isn't what you've read. It's what happens when you're in the room. Could you hold your own in a conversation with your engineering team about model evaluation, bias, and data drift? Did you really understand the last conversation with Legal about how liability flows through the complexities of your AI supply chain? You know whether your last conversation with engineering or legal went well or whether you were out of your depth. The assessment is designed to surface that honest answer and to distinguish between depth in one domain and adequate breadth across many.

2. Application

Application is the gap between knowing what governance should look like and being able to build it. A surprising number of practitioners can articulate a sophisticated governance framework but have never actually designed a risk triage process, built an inventory that people use, rolled out a use policy that engineers and business users follow, or conducted a review that examined system behaviour rather than just checking the completion of audit documentation.

This is a craft skill. It develops through doing, not studying. And it's fine if you haven't built governance mechanisms yet. Most people haven't. The field is young and the opportunities to do this work are still emerging. But be honest with yourself about where you are. Someone who has designed three governance mechanisms that failed and iterated on each one is further along than someone who has read ten books about governance, posts about it on LinkedIn every day, and has never designed anything. We're all learning together, and there's no shame in being early in that process. The only mistake is fooling yourself about it. Have you built something that works? Can you point to governance outcomes that happened because of what you built? If the answer is no, that's where you need to focus.

Building this kind of applied capability is what the Foundation Track of our Practitioner Program is designed for: four courses covering exactly the mechanism design, lifecycle governance, and policy writing this dimension assesses.

3. Resources

Application is a skill. Resources is your equipment. Application asks: can you design a risk process? Resources asks: do you have the templates, frameworks, tools, and practice guidance that let you do governance work at pace and at scale? A practitioner without resources reinvents everything from scratch. They spend time building templates that already exist elsewhere. They design processes without reference to established frameworks. They make decisions without the benefit of the collective experience captured in standards and practice guidance. They work slowly, produce inconsistent outputs, and miss things that a well-resourced practitioner catches as a matter of course.

Resources are not the same as tools. A practitioner who has purchased an expensive governance platform but can't configure it properly has a tool, not a resource. A resource is something you can actually use effectively to make your work better. That might be a well-designed spreadsheet template or a commercial platform, depending on the context. Your professional network and community are resources too. The practitioners you can call when you're stuck on a problem, the peers who've already solved something similar, the community you contribute to and draw from. Governance is too cross-functional and too fast-moving for anyone to figure it all out alone. What matters is whether you've assembled the professional equipment and relationships you need and know how to use them.

4. Influence

This is the dimension that I find governance training programs almost never address, and it's often the one that determines whether a practitioner's work actually changes anything. AI governance is inherently cross-functional. You need to engage engineers, product managers, legal counsel, risk professionals, and senior leadership. Each group has different priorities, different vocabulary, different incentives. A practitioner who can't bridge these differences produces governance that sits in a silo: maybe technically sound, but disconnected from the decisions it needs to influence.

Influence is not about authority. It's about the ability to get things done in an organisation where you rarely have direct control over the people who need to act. Can you explain a complex AI risk to a board in terms they care about? Can you sit with an engineering team and have a conversation about model evaluation that earns their respect? Can you navigate a room where legal, product, and engineering have conflicting priorities and help them reach a decision everyone can work with? I find the best way to assess this is to think about a specific story - when you last raised a governance concern with the business or engineering, what happened? That question has an honest answer and most practitioners know exactly what it is.

5. Judgment

Judgment is the hardest dimension to develop and the most important one to get right. It's what separates a practitioner who applies governance mechanically from one who applies it wisely. Every AI governance situation involves trade-offs, ambiguity, and competing considerations. A customer-facing generative AI system that interacts with vulnerable populations demands fundamentally different governance intensity than an internal analytics dashboard. Even at a novice level, a practitioner who applies the same process to both is wasting resources on one and probably under-governing the other. Judgment means reading the context and calibrating the response.

It also means recognising the difference between technical and adaptive challenges, something I draw from Ronald Heifetz's work on leadership. A technical challenge has a known solution: you need a monitoring tool, you need to fix a data pipeline, you need to document a process. Apply expertise and execute. An adaptive challenge requires people to change how they work, what they value, or how they think about risk. You can't solve an adaptive problem by implementing a new tool. You have to help people through a process of change. Misdiagnosing adaptive challenges as technical ones is one of the most common and most expensive mistakes in governance. You implement a new review process. People route around it. Nothing changes. Because the problem was never the process. It was the culture. And culture is an adaptive challenge.

Across the assessment data, three transitions keep appearing. They're the points where practitioners get stuck across these five dimensions. I wrote about them in The Three Leaps in Your AI Governance Career.

Organizational Capacity: Five Dimensions

The organizational assessment turns from the individual to the organization. It asks whether your organization has the foundations to govern AI effectively. Not whether your governance is perfect, but whether the conditions exist for governance to function and improve.

1. Visibility

Do you know what AI you have, what it does, who it affects, and where the consequential risks sit? Everything depends on visibility. If you don't know what AI systems exist in your organisation, you can't assess their risk, you can't govern their development, and you certainly can't demonstrate compliance to an auditor who comes asking. And in most organisations I've worked with, the gap between what leadership believes is happening with AI and what is actually happening is enormous. AI is being built by engineering teams, procured from vendors, embedded in SaaS products, and adopted informally by employees using tools that nobody in governance even knows about. Shadow AI is everywhere, and it's growing.

At one end of the maturity scale, nobody can answer the question "what AI systems do we have?" with any confidence. At the other end, the AI inventory is a living governance mechanism, woven into development and procurement workflows, triggering risk triage when new systems appear and reassessment when existing ones change. The difference is not just completeness. It's whether visibility is a snapshot someone took six months ago or a capability that keeps pace with how your organisation actually uses AI.

2. Leadership

Does your organisation have the leadership capacity to make AI governance happen? Yes, somebody needs to own this. Not a committee, not a shared responsibility, not a line item in someone's job description alongside fifteen other priorities. A named individual with authority, budget, and a mandate the rest of the organization actually recognises. That structural ownership matters.

But leadership in this context is not really about position in the org chart. It's about the capacity to motivate and mobilise action. In an adaptive organisation, that can happen at any level. An engineer who pushes back on a deployment because the risk assessment is incomplete is exercising governance leadership. A product manager who insists on involving the governance team early rather than treating review as a gate at the end is exercising governance leadership. The question is whether the organization enables and rewards that behaviour, or whether it treats it as friction. I place culture within the leadership dimension deliberately, rather than treating it as a separate concern. When teams treat governance as a box-ticking exercise, or when engineers route around governance processes because they see them as obstacles, or when people don't feel safe raising concerns about AI systems, those are cultural problems that require a leadership response.

3. Risk

Can your organisation identify, assess, and respond to AI-related risks before they become incidents? This dimension covers the full spectrum of what could go wrong with your AI systems: technical risks like model drift and data quality, ethical risks like bias and lack of transparency, operational risks like system failures and security vulnerabilities, and regulatory risks including your obligations under frameworks like the EU AI Act. Compliance sits inside this dimension rather than alongside it, because understanding and meeting your regulatory obligations is one aspect of managing risk, not a separate activity.

The question is how your organisation does this in practice. Some organisations don't do risk assessment at all. Others do it periodically, running generic assessments on a quarterly schedule that may or may not reflect what's actually changed. The organisations that score highest here are the ones where risk sensing is continuous and event-driven: when a new system is proposed, when a model is retrained, when a regulation changes, the risk process activates and responds. And critically, it updates its own criteria based on what it discovers. A risk process that asks the same questions year after year regardless of what it's learned is not governance. It's just ritual.

4. Integration

Are functioning governance mechanisms embedded into how AI systems are actually built, deployed, and operated? This is where most organisations score lowest, in my experience. You can have policies, committees, risk registers, and compliance documentation. If none of it is connected to how AI systems are actually developed and operated, you have theatre. Integration asks whether governance mechanisms are woven into engineering and operational workflows as closed-loop systems. A mechanism senses something (a new system proposed, a model retrained, a performance threshold breached), triggers a decision, produces an action, and feeds the outcome back to improve future operation. That feedback loop is not something you add later. It needs to be designed in.
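To make the closed-loop idea concrete, here is a minimal Python sketch of a mechanism that senses an event, triggers a decision, records an action, and feeds the outcome back into its own criteria. Everything in it is an illustrative assumption rather than part of the assessment: the `Event` and `Mechanism` names, the 1 to 5 severity scale, and the rule that tightens the review threshold after a missed incident are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Event:
    """Something the mechanism senses (all field values are hypothetical)."""
    system: str
    kind: str      # e.g. "new_system", "model_retrained", "threshold_breached"
    severity: int  # assumed scale: 1 (minor) to 5 (critical)


@dataclass
class Mechanism:
    """A toy closed-loop governance mechanism: sense, decide, act, learn."""
    review_threshold: int = 3  # assumed starting criterion
    log: List[str] = field(default_factory=list)

    def decide(self, event: Event) -> str:
        # Decision: route to a full review or a lightweight fast track.
        if event.severity >= self.review_threshold:
            return "full_review"
        return "fast_track"

    def act(self, event: Event, decision: str) -> None:
        # Action: here we only record it; a real mechanism would open a
        # review ticket, block a deployment, notify an owner, and so on.
        self.log.append(f"{event.system}: {event.kind} -> {decision}")

    def learn(self, decision: str, incident_followed: bool) -> None:
        # Feedback: if a fast-tracked change later caused an incident,
        # tighten the criterion so similar events get a full review.
        if decision == "fast_track" and incident_followed:
            self.review_threshold = max(1, self.review_threshold - 1)

    def handle(self, event: Event) -> str:
        decision = self.decide(event)
        self.act(event, decision)
        return decision
```

The point of the sketch is the `learn` step. Without it, the mechanism would keep routing the same kind of event the same way no matter what happened afterwards, which is the open-loop pattern this dimension is meant to detect.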

This dimension also covers tooling and infrastructure. Do you have the technical systems to support governance at the pace your teams deploy? An organisation trying to govern dozens of AI systems with documents, spreadsheets and shared drives will eventually drown in manual work, and governance will become the bottleneck that everyone warned it would be. When organizations complain that governance is too slow, it's commonly an integration problem.

5. Capability

Has your organization invested in the people who do governance, and does it have enough of them in the right places? This is where the organizational assessment connects most directly to the practitioner assessment. The practitioner assessment asks whether individuals have the knowledge, application skills, resources, influence, and judgment to do governance work. This dimension asks whether the organisation has created the conditions for those people to succeed. Has it hired or developed people with the right skills? Has it given them training, time, and mandate? And critically, has it spread governance capability beyond a central team into the functions where AI is actually built and operated?

AI governance can't be a silo. A small team that sits outside engineering or the business and reviews things after the fact is too slow, too adversarial, and too disconnected from how AI systems are actually built. You need people across engineering, product, legal, risk, and compliance who understand their governance responsibilities and contribute actively. The core governance team orchestrates. The network does much of the work embedded within their own functions. At the lowest maturity, governance responsibility is an unfunded mandate assigned to someone who already has three other jobs and no relevant training. At the highest, governance capability is distributed across the organisation: a competence embedded in engineering teams and product teams, not just a function.

Using the Framework

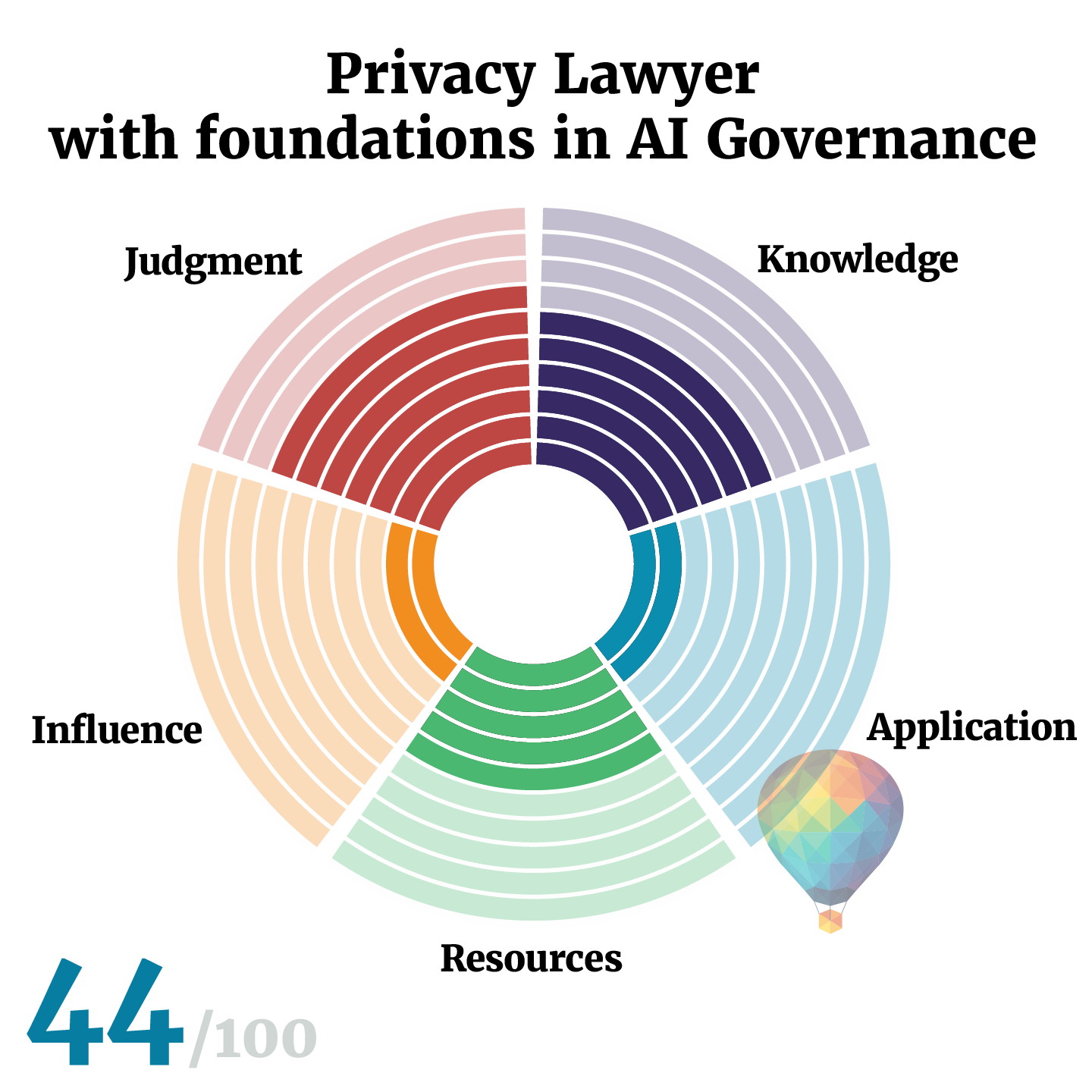

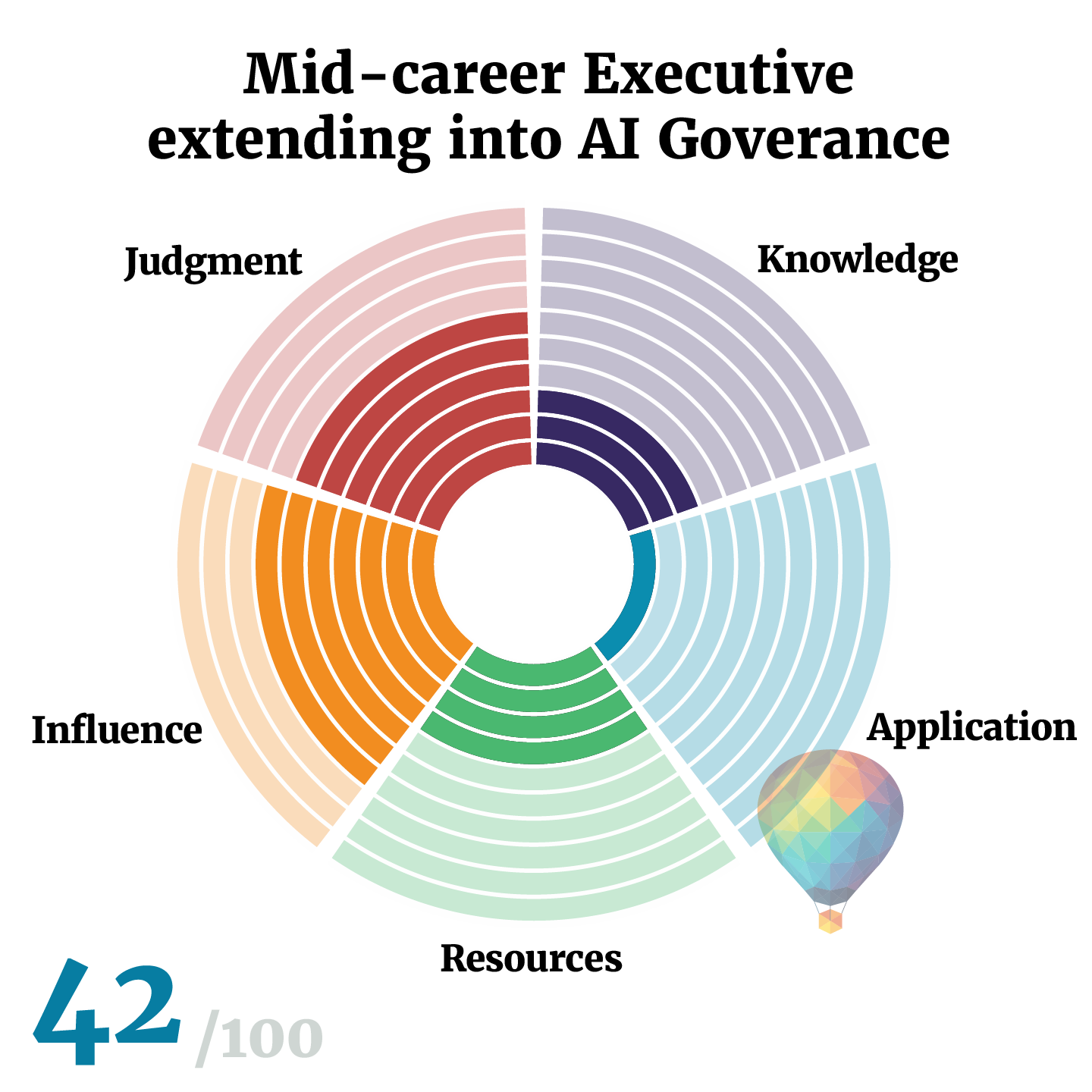

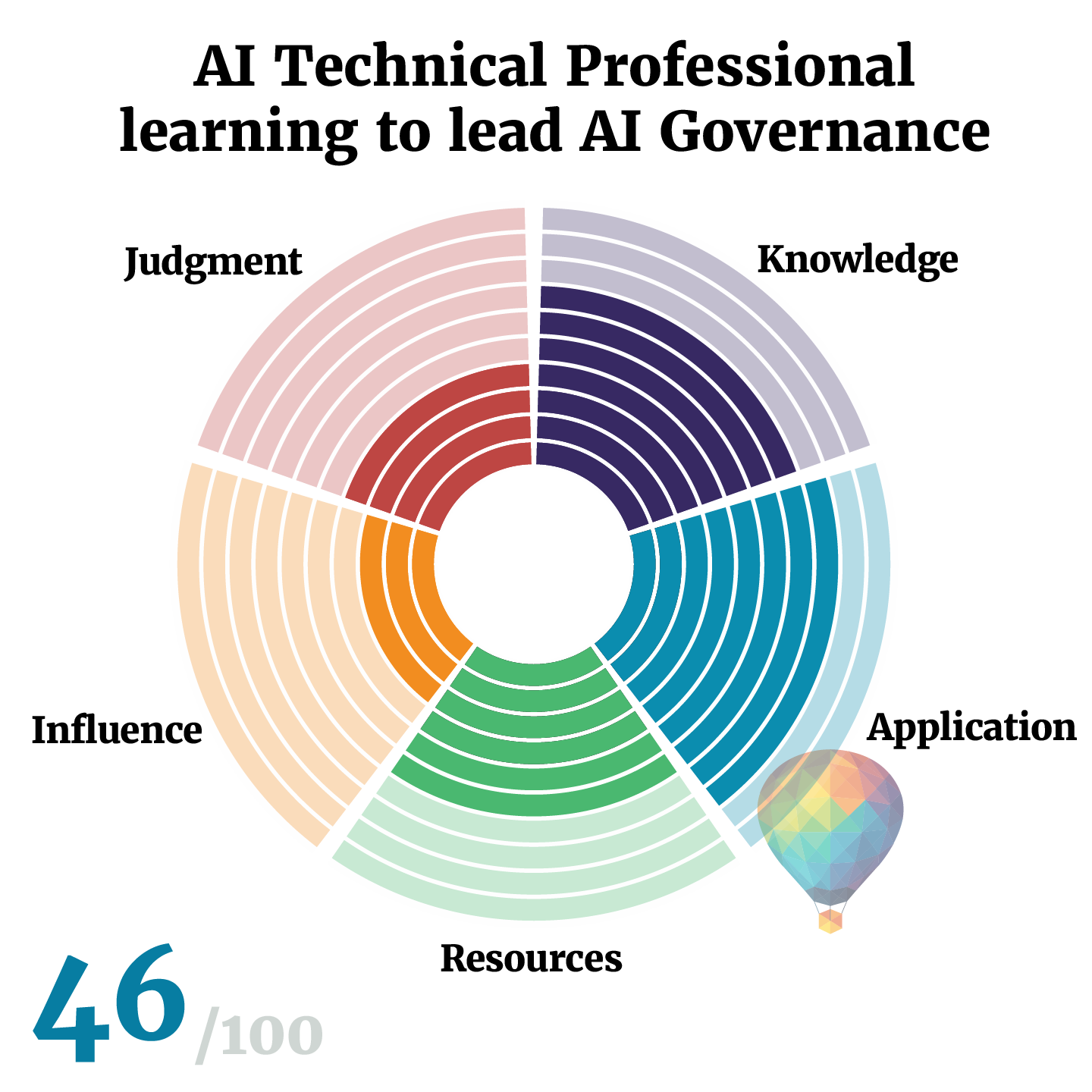

Both assessments use the same scoring approach. Four questions per dimension, each scored on a 0–5 scale. That gives 0–20 per dimension and 0–100 overall. The scores map to five maturity levels.

For practitioners, the levels run from Novice through Emerging, Developing, Proficient, to Expert. A Novice is someone new to AI governance. They may have deep professional experience in another domain, but they haven't yet developed the specific knowledge, skills, or relationships needed to do governance work. An Emerging practitioner has started building in some areas but still has significant gaps. Developing means you can do solid governance work across most dimensions: you know the standards, you've built things that function, you can engage across teams. Proficient is where your work consistently shapes organisational outcomes. And Expert is where your judgment, influence, and craft are mature enough to lead governance in complex, ambiguous environments and develop other practitioners. In our pilot use, most people taking the assessment for the first time seemed to land somewhere between Novice and Developing. That's realistic for where the field is right now.

For organizations, the levels run from Unaware through Aware, Developing, Functioning, to Adaptive. An Unaware organisation has no real governance in place. AI is being used, but nobody is systematically tracking it, assessing its risks, or governing its deployment. Aware means leadership recognises the need, but governance is still informal or reactive. Developing means formal governance is taking shape: roles, policies, processes exist, but they may be static or disconnected from how AI is actually built and operated. Functioning means governance is embedded into workflows and actively managing risk across the AI portfolio. And Adaptive means governance is a living system that learns and evolves as the AI portfolio, regulatory environment, and risk landscape change. The difference between Developing and Adaptive is the difference between having a governance program and having governance that actually works.
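The scoring arithmetic can be sketched in a few lines of Python. The four-questions-per-dimension structure, the 0–5 answer scale, and the level names come from the description above. The cut-offs between levels are not stated in this article, so the equal 20-point bands below are purely an assumption for illustration, and the function names are hypothetical.

```python
from typing import Dict, List

PRACTITIONER_LEVELS = ["Novice", "Emerging", "Developing", "Proficient", "Expert"]
ORGANISATION_LEVELS = ["Unaware", "Aware", "Developing", "Functioning", "Adaptive"]


def dimension_score(answers: List[int]) -> int:
    """Sum four answers, each on a 0-5 scale, into a 0-20 dimension score."""
    if len(answers) != 4:
        raise ValueError("each dimension has exactly four questions")
    if any(not 0 <= a <= 5 for a in answers):
        raise ValueError("each answer must be between 0 and 5")
    return sum(answers)


def total_score(answers_by_dimension: Dict[str, List[int]]) -> int:
    """Sum five dimension scores into a 0-100 overall score."""
    if len(answers_by_dimension) != 5:
        raise ValueError("each assessment has exactly five dimensions")
    return sum(dimension_score(a) for a in answers_by_dimension.values())


def maturity_level(total: int, levels: List[str]) -> str:
    """Map a 0-100 total to one of five levels.

    ASSUMPTION: five equal 20-point bands (0-19, 20-39, ..., 80-100).
    The real assessment may use different cut-offs.
    """
    if not 0 <= total <= 100:
        raise ValueError("total must be between 0 and 100")
    return levels[min(total // 20, 4)]
```

For example, a practitioner whose five dimension scores are 15, 5, 11, 5 and 15 has a total of 51, which falls in the third band under these assumed cut-offs.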

The dimension scores are far more useful than the overall total, because practitioners with roughly the same total score can have completely different profiles, and need completely different development paths.

Consider a privacy lawyer who has invested heavily in learning about AI. He knows the regulatory landscape cold, he's studied the technology, and he's developed strong judgment about proportionality and risk. His knowledge and judgment scores are high. But he's never built a governance mechanism. He's never designed a risk triage process or an inventory that engineering teams actually use. And while he's respected in legal circles, he struggles to get traction with product and engineering teams who see governance as a legal function telling them what they can't do. His application and influence scores are low. His development path is clear: stop studying and start building, and invest in the cross-functional relationships that turn analysis into action.

Now consider a mid-career executive moving into AI governance. She's spent years navigating complex organisations, building coalitions, and getting things done across functions. She has access to established frameworks, standards, and professional networks. Her influence, judgment, and resources scores are strong. But her technical knowledge of AI systems is shallow, and she's never designed a governance process from scratch. She's managed programmes, not built mechanisms. Her knowledge and application scores are low. Her development path is different: she needs to build genuine technical literacy and get her hands dirty with the craft of governance design.

Then there's the practitioner who has been doing AI governance work for a couple of years. She's built real things: risk processes, inventories, review frameworks. She knows the standards, she's assembled a solid toolkit, and she understands the technology. Her knowledge, application, and resources scores are all strong. But she's hitting a ceiling. Her recommendations are sound, but they don't always land. She's not yet in the rooms where the consequential decisions get made, and when novel situations arise that don't fit neatly into existing frameworks, she defaults to process rather than reading the context. Her influence and judgment scores are where the growth needs to happen. That's the difference between a competent practitioner and one who can scale their impact across an organisation.

Three different people. Three different profiles. Three completely different priorities.
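As a rough illustration of why the profile matters more than the total, the sketch below gives the three practitioners above the same overall score and then reads off their two weakest dimensions. The numbers are invented for illustration only; they are not assessment data, and `development_priorities` is a hypothetical helper, not part of the assessment itself.

```python
from typing import Dict, List

# Invented 0-20 dimension scores chosen to mirror the three stories above.
# Each profile sums to the same total (55) on purpose.
PROFILES: Dict[str, Dict[str, int]] = {
    "privacy_lawyer": {
        "knowledge": 16, "application": 6, "resources": 11,
        "influence": 7, "judgment": 15,
    },
    "executive": {
        "knowledge": 7, "application": 6, "resources": 14,
        "influence": 15, "judgment": 13,
    },
    "experienced_practitioner": {
        "knowledge": 14, "application": 14, "resources": 13,
        "influence": 7, "judgment": 7,
    },
}


def development_priorities(profile: Dict[str, int], n: int = 2) -> List[str]:
    """Return the n lowest-scoring dimensions, ties broken alphabetically."""
    ranked = sorted(profile.items(), key=lambda item: (item[1], item[0]))
    return [name for name, _ in ranked[:n]]


def summarise(profiles: Dict[str, Dict[str, int]]) -> Dict[str, tuple]:
    """Pair each person's total with their weakest dimensions."""
    return {
        person: (sum(scores.values()), development_priorities(scores))
        for person, scores in profiles.items()
    }
```

Calling `summarise(PROFILES)` returns the same total for all three people but a different pair of priorities for each, which is the pattern the examples describe.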

The organizational capacity perspective

The same logic applies to organisations. Consider a large financial services firm that has invested heavily in governance structure. It has a Chief AI Officer, a governance committee, a dedicated budget, and clear executive sponsorship. Its leadership and capability scores are strong. But when you look more closely, the governance function operates in parallel to engineering rather than inside it. Risk assessments happen quarterly on a fixed schedule. The AI inventory was last updated when someone remembered to ask. Visibility and integration are where the weaknesses sit, and until those are addressed, the governance programme will continue to look impressive on paper while the engineering teams work around it.

Now consider a mid-sized technology company where a small group of engineers started building governance from the ground up. They've integrated risk triage into their deployment pipeline, they maintain a live inventory, and they've built monitoring that actually triggers action. Their visibility and integration scores are high. But there's no executive sponsor, no formal mandate, and no budget beyond what they've carved out of their own time. Leadership and capability are the gaps. The mechanisms work, but they're fragile because they depend entirely on the people who built them. If those people leave, the governance goes with them.

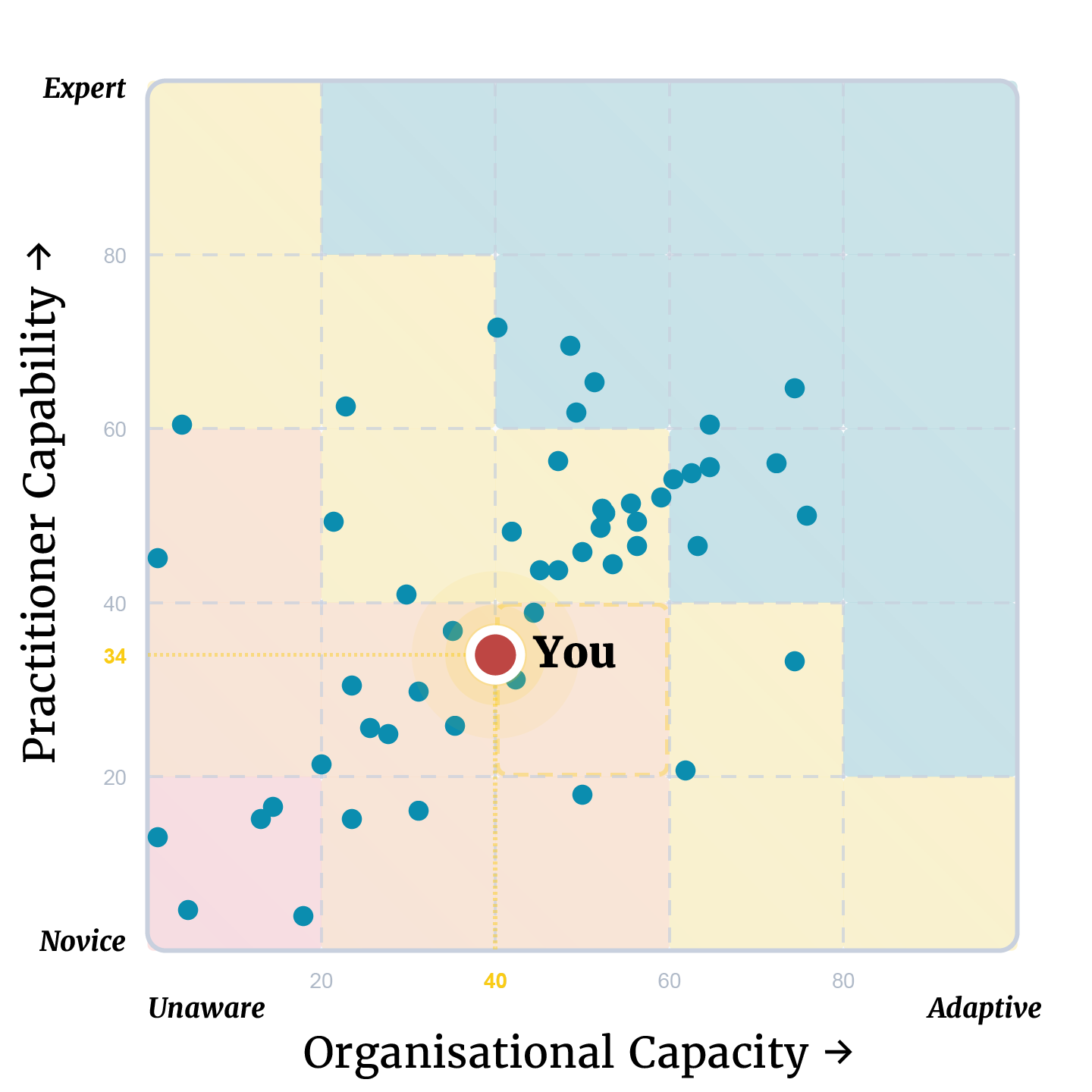

Plotting capability vs capacity

When you plot practitioner and organisational scores together, the picture gets richer than either assessment alone can provide. A strong practitioner inside an organisation with low capacity faces a difficult challenge: they know what to build, but they need to focus on making the case for investment, securing mandate, and building the organisational conditions that will let their work stick. A developing practitioner inside an organisation with strong capacity faces a completely different one: the structures and mechanisms are there, the leadership support exists, and the priority is their own professional development to make use of the opportunity in front of them.

The most common pattern I expect to see is somewhere in between: practitioners who are strong in some dimensions and weak in others, working inside organisations that have the same uneven profile. When you look at both sets of scores together, you can start asking better questions. Is the organisation's low integration score a structural problem, or is it because the practitioners lack the application skills to build mechanisms? Is the practitioner's low influence score about their own development, or is the organisation's leadership culture making it impossible for anyone to get traction? The dimension scores from both assessments, read side by side, start to untangle those questions.

This is something we spend time on in our Enterprise Coaching work. When we talk with an organisation's governance team, we often find that the problems people have been attributing to practitioner capability are actually organisational capacity problems, and vice versa. A practitioner who seems to lack influence might actually be operating in an organisation where the leadership dimension doesn't enable action. An organisation that thinks it has an integration problem might actually have a capability problem: the governance team doesn't have the application skills to design mechanisms that engineering teams will adopt. Getting the diagnosis right matters, because the investment and work needed are completely different. You don't send someone on a training course to fix a leadership problem, and you don't restructure your governance committee to fix a skills gap. The cross-assessment view is what stops you solving the wrong problem.

Start With the Diagnosis

So that's the third article in what I could probably call a series about getting things moving in AI governance. The first made the case that the biggest risk is waiting too long to start. The second walked through how to build governance that actually works, while building AI itself. And this one has been about figuring out where you stand so you know what to do next.

If you've been reading along and thinking about how to get started, the assessment is the concrete first step. Both assessments are available free at assess.aicareer.pro. It takes about fifteen minutes. You get your results emailed with tailored recommendations and a plot of where you stand relative to peer practitioners and organisations.

I encourage you to take it and answer honestly - it's only useful as a diagnostic if you do. Look at the dimension scores, not just the total. Identify the gaps. And I'd recommend retaking it every six months. Your AI portfolio evolves. Regulations change. People join and leave. What needed investment six months ago might be strong now, and what was strong might be slipping.

The assessments give you some starting coordinates and some possible next steps. What you do with them is the real work.

But at least you'll know you're not wearing the juice.

Once you have your results

The Assessment tells you where you stand. The next question is what to do about it.

For practitioners who want to build real capability across the five dimensions, the AI Governance Practitioner Program is built around exactly the diagnostic logic this article describes. The Foundation Track covers organising for AI governance, working through the AI lifecycle, and writing the policies that hold up. The AIGP Exam Preparation Course sits alongside it for those building a credential as well as capability. Our Bundle combines both at the best value.

For organisations working through the capacity dimensions, Enterprise Coaching is where we work with leadership teams on the structural and cultural challenges this assessment surfaces.

Take the Assessment first. Then come back here and we'll point you to what fits.